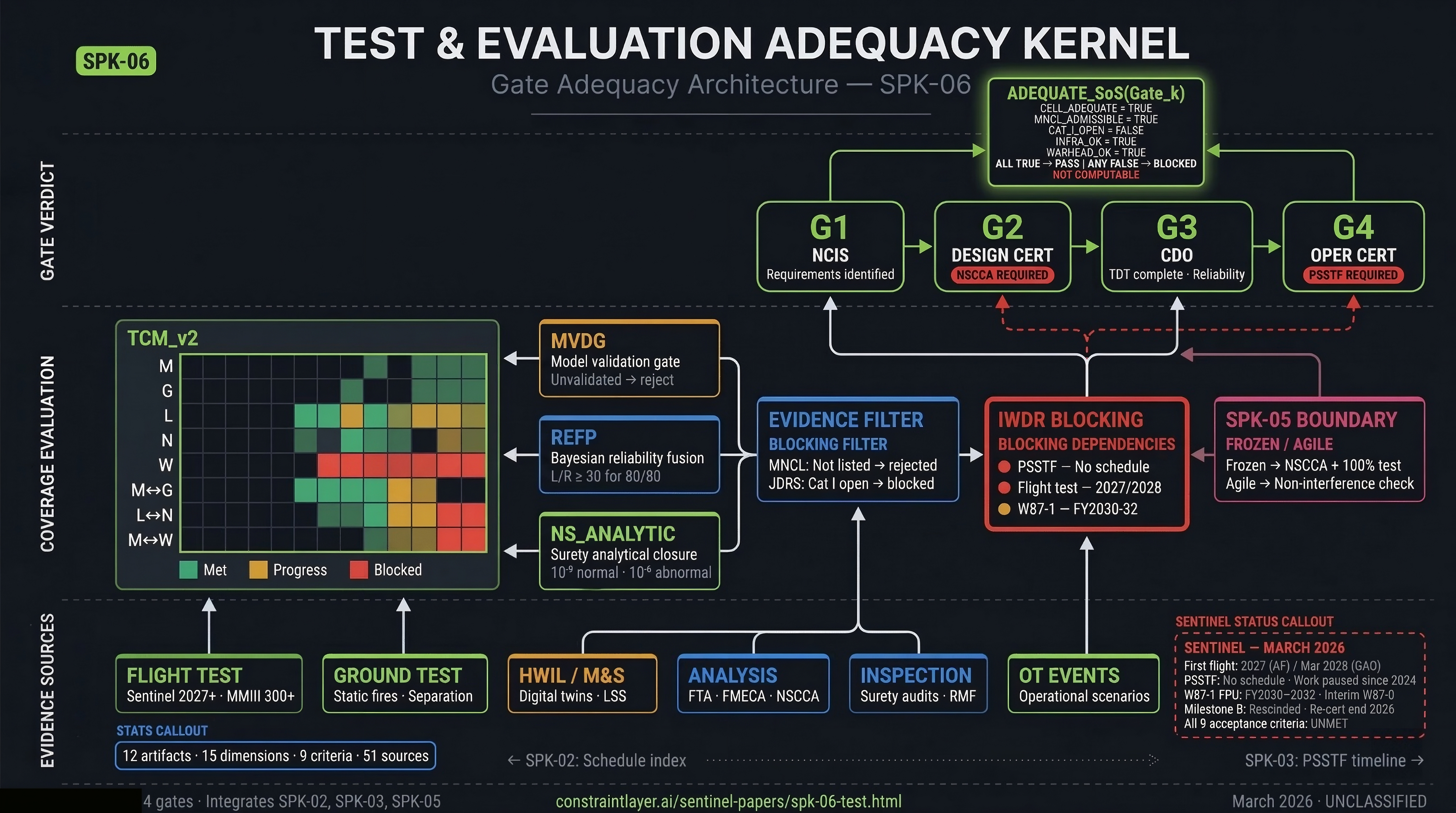

Sentinel must achieve the verification confidence of 300+ Minuteman III flight tests[12] with roughly 25 test missiles.[13] DOT&E warns digital twins cannot substitute for live testing.[4] GAO found no schedule for a Sentinel test facility.[2] "Adequate testing" remains an assertion, not a computation. TEA-K makes it computable.

The January 2024 Nunn-McCurdy breach[1] exposed gaps beyond cost. When the first Sentinel flight test slipped from late 2023 to 2027,[7] no framework existed to quantify which certification claims were invalidated or which evidence chains broke.

Three crises converge: the model validation gap (digital twins without flight data), the test facility void (no schedule for security testing infrastructure), and the warhead integration paradox (W87-1 needs Sentinel data; Sentinel needs W87-1). TEA-K addresses all three with explicit, testable artifacts.

The December 2022 Selected Acquisition Report[14] reveals a stark split. Missile subsystem reviews succeeded; ground infrastructure reviews failed.

Completed 2022 CDRs: Stage 1, 2, and 3 Booster Thrust Vector Control CDR (March 29–30, 2022, including Honeywell TVC system). Single Board Computer Developmental Unit CDR (April 26, 2022). KS-75/Cryptographic Unit Test Station CDR with Sandia National Laboratories (August 23–24, 2022). First Level 3 CDR for 1/2 and 2/3 Interstages (November 1–2, 2022).

Failed: Ground Segment PDR — DoD determined the program "was not at a Preliminary Design Review level of maturity at the Milestone B," identifying this as a root cause of cost overruns.[1]

The program had a rocket advancing through design reviews but unresolved ground infrastructure. That asymmetry persists into 2026.

Minuteman III reliability was built on more than 300 Glory Trip test launches since 1970,[12] with the most recent (GT 255) on March 3, 2026. Sentinel has 25 EMD/test missiles from a total buy of 659.[13] That is roughly 1/12th the statistical base.

Demonstrating high reliability through flight testing alone requires large sample sizes. With zero failures allowed, the binomial relationship n ≥ ln(1−C) / ln(R) gives the number of consecutive successful flights n needed to demonstrate reliability R at confidence C. Demonstrating R = 0.90 at C = 0.90 takes 22 flights, and R = 0.95 at the same confidence takes 45. With 25 test units split between developmental and operational testing, pure frequentist demonstration is insufficient.
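The zero-failure relationship can be checked numerically. The sketch below is illustrative only: the function names are hypothetical, and the reliability and confidence targets are example values rather than Sentinel requirements.

```python
import math


def zero_failure_flights(reliability: float, confidence: float) -> int:
    """Smallest n such that n consecutive successes demonstrate
    `reliability` at `confidence`, i.e. 1 - reliability**n >= confidence."""
    if not (0.0 < reliability < 1.0 and 0.0 < confidence < 1.0):
        raise ValueError("reliability and confidence must lie strictly between 0 and 1")
    return math.ceil(math.log(1.0 - confidence) / math.log(reliability))


def demonstrated_confidence(reliability: float, flights: int) -> float:
    """Confidence reached for `reliability` after `flights` successes and no failures."""
    return 1.0 - reliability ** flights


if __name__ == "__main__":
    for r in (0.90, 0.95, 0.99):  # example targets only
        print(f"R={r:.2f}, C=0.90 -> {zero_failure_flights(r, 0.90)} flights")
    # Confidence for R=0.95 if all 25 test missiles flew successfully
    print(f"{demonstrated_confidence(0.95, 25):.3f}")
```

Even if every one of the 25 test missiles were flown and succeeded, the confidence reached for R = 0.95 would stay below 0.90, which is the gap the Bayesian evidence fusion is meant to address.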

TEA-K assesses which MMIII data can inform Sentinel reliability based on design commonality. This is a TEA-K analytical output — no published DoD transferability analysis exists in unclassified literature.

| Subsystem | Transferability | Rationale |
|---|---|---|
| W87-0 warhead | HIGH | Same warhead; decades of surveillance data |
| Mk21 RV aero | HIGH | Well-characterized; wind tunnel + flight data |
| Propulsion | NONE | Steel cases (MMIII) vs. composite (Sentinel); different chemistry |
| Guidance | NONE | Analog (MMIII) vs. GPS/modular (Sentinel) |
| Ground systems | NONE | Copper HICS vs. fiber optic |

Propulsion, guidance, and ground system reliability must be established almost entirely from new Sentinel data.
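One way the transferability classes could feed a Bayesian reliability estimate is as a discount on legacy evidence in a Beta prior. This is a minimal sketch, not the REFP itself: the weights in `LEGACY_WEIGHT`, the function names, and all counts in the usage example are assumptions made for illustration.

```python
# Hypothetical discount weights: the fraction of legacy MMIII evidence
# that counts toward the Sentinel prior for each transferability class.
LEGACY_WEIGHT = {"HIGH": 0.5, "NONE": 0.0}


def beta_posterior(transferability, legacy_successes, legacy_failures,
                   new_successes, new_failures):
    """Return (alpha, beta) of a Beta posterior on subsystem reliability.

    Starts from a uniform Beta(1, 1), adds discounted legacy counts as
    prior pseudo-observations, then adds new Sentinel test outcomes.
    """
    w = LEGACY_WEIGHT[transferability]
    alpha = 1.0 + w * legacy_successes + new_successes
    beta = 1.0 + w * legacy_failures + new_failures
    return alpha, beta


def posterior_mean(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution."""
    return alpha / (alpha + beta)


if __name__ == "__main__":
    # Illustrative counts only, not real test data.
    for cls in ("HIGH", "NONE"):
        a, b = beta_posterior(cls, 100, 2, 10, 0)
        print(cls, a, b, round(posterior_mean(a, b), 3))
```

With a NONE classification the legacy counts drop out entirely, so the posterior rests on new Sentinel data alone.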

DOT&E's FY2024[4] and FY2025 annual reports[5] flag the central risk: digital twins "aid, but do not obviate" live testing. Without physical flight test calibration, models remain unvalidated constructs that may diverge from reality.

HWIL can simulate 10,000 flights in a week. HWIL cannot simulate stage separation, pyrotechnic shock, vibration, or reentry plasma. The first Sentinel flight test is targeted for 2027 by the Air Force,[7] though GAO projects no earlier than March 2028.[3]

Propulsion testing has advanced substantially, providing partial model calibration:

| Test | Date | Significance |
|---|---|---|
| Stage-1 qual static fire[15] | Mar 6, 2025 | Promontory, UT; validated digital models |
| Stage-2 qual static fire (vacuum)[16] | Jul 20, 2025 | Arnold Engineering Dev Complex |
| Stage-3 development tests | Pre-Mar 2025 | Completed prior to Stage-1 qual |
| PBPS hot-fire[17] | 2024–2025 | Liquid propulsion (distinct test surface) |
| Interstage separation tests | 2024–2025 | Physical separation validation |
| Launch Support System CDR[39] | Sep/Oct 2025 | Digital twin facility; generates virtual launches |

These anchor propulsion and separation models. Trajectory, reentry, and full-system integration models remain USED_UNVALIDATED until first flight.

The Model Validation Dependency Graph (MVDG) is a directed graph where nodes are models and physical tests, and edges encode calibration relationships. For each TCM cell citing M&S evidence: if M&S_STATUS = USED_UNVALIDATED and no MVDG edge exists for the relevant regime, the cell cannot claim adequacy — it is marked "preliminary assessment only." After first flight, models are calibrated against flight data, residual error bounds are computed, and M&S_STATUS updates to USED_VALIDATED for calibrated regimes.
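The MVDG rule can be sketched as a small graph lookup. The class, function, model, and regime names below are hypothetical; only the status value USED_UNVALIDATED and the "preliminary assessment only" outcome come from the text above.

```python
from dataclasses import dataclass, field


@dataclass
class MVDG:
    """Directed calibration graph: physical test -> (model, regime)."""
    # edges[(model, regime)] holds the physical tests that calibrate
    # that model in that regime.
    edges: dict = field(default_factory=dict)

    def add_calibration(self, test: str, model: str, regime: str) -> None:
        self.edges.setdefault((model, regime), set()).add(test)

    def anchors(self, model: str, regime: str) -> set:
        return self.edges.get((model, regime), set())


def cell_claim(ms_status: str, mvdg: MVDG, model: str, regime: str) -> str:
    """Apply the rule: unvalidated M&S with no calibration edge for the
    regime cannot support an adequacy claim."""
    if ms_status == "USED_UNVALIDATED" and not mvdg.anchors(model, regime):
        return "PRELIMINARY_ASSESSMENT_ONLY"
    return "ELIGIBLE"  # still subject to the cell's other checks


if __name__ == "__main__":
    g = MVDG()
    g.add_calibration("stage1_qual_static_fire", "stage1_propulsion", "sea_level_burn")
    print(cell_claim("USED_UNVALIDATED", g, "stage1_propulsion", "sea_level_burn"))
    print(cell_claim("USED_UNVALIDATED", g, "reentry_aero", "hypersonic"))
```

Keying edges by (model, regime) rather than by model alone reflects the point that a model calibrated in one regime stays unanchored in the others.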

The Launch Support System generates thousands of virtual launches but is classified as MODEL_DERIVED in the reliability framework — lower weight than physical test data, requiring cross-validation before being given full weight.

GAO-25-108466[2] (September 2025) found that the Air Force "has not developed a schedule for construction of a Sentinel test facility." GAO-26-108755[3] (February 2026) reinforced this, recommending that the Air Force complete "Sentinel launch facility test and evaluation activities early in the transition."

The Physical Security Systems Test Facility is essential for:

| Function | What It Tests | What It Blocks |
|---|---|---|
| Delay & Denial | Adversary breach time vs. Design Basis Threat | Gate 4 (Operational Cert) |
| RVA Sensors | Detection in all weather (blizzard, fog) | Gate 4 |
| Two-Person Concept | Physical layout enforces nuclear security rules | Gate 4 |
| TO Validation | Maintenance procedures executable by target workforce | Gate 4 |

Without this facility, Operational Certification cannot complete. TEA-K encodes this as an explicit blocking condition: INFRA_OK = FALSE for affected rows until PSSTF functions are validated.
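The blocking condition could be expressed as a simple predicate over the four PSSTF functions. The identifiers below are hypothetical stand-ins for whatever keys the dependency register actually uses.

```python
# Hypothetical keys for the four PSSTF functions listed in the table above.
PSSTF_FUNCTIONS = ("delay_denial", "rva_sensors", "two_person_concept", "to_validation")


def gate4_blockers(validated: set) -> list:
    """PSSTF functions that have not yet been validated."""
    return [f for f in PSSTF_FUNCTIONS if f not in validated]


def infra_ok(validated: set) -> bool:
    """INFRA_OK is TRUE only when every PSSTF function is validated."""
    return not gate4_blockers(validated)


if __name__ == "__main__":
    print(infra_ok({"rva_sensors"}))        # False
    print(gate4_blockers({"rva_sensors"}))  # the three remaining functions
```

Returning the list of open blockers, not just a boolean, keeps the reason for a blocked gate visible to reviewers.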

Sentinel faces a circular dependency between missile and warhead certification:

| Milestone | Date/Status | Source |
|---|---|---|
| Phase 6.3 entry | May 2023 | NNSA[21] |
| First war reserve pit qualified | October 2024 | NNSA[22] |
| Mk21A RV flight test | June 2024 | Lockheed Martin[23] |
| First Production Unit | FY2030–FY2032 | NNSA / CRS |

Sentinel will initially deploy with W87-0 warheads in Mk21 RVs.[13] This requires delta certification (proving W87-0 survives Sentinel's flight environment) and creates a critical dependency on the ICBM Fuze Modernization program,[24] which delivers the replacement Arming and Fuzing Assembly and is managed by USAF/NNSA with Sandia as technical design agent.

Additionally, Sentinel's confirmed MIRV upload capability requirement[7] means the Post Boost Vehicle's multi-RV interface logic must be tested across single and multiple warhead configurations.

DoDI 3150.02[27] (reissued December 17, 2024) defines four nuclear surety standards. The reissued instruction explicitly integrates cybersecurity, supply chain risk management, and emerging technology threat assessment into the nuclear surety program framework — elevating cyber to peer status with physical surety.[28] DAFMAN 91-118[10] specifies the probability thresholds:

These probabilities are impossible to demonstrate through testing. Demonstrating 10⁻⁹ empirically would require billions of test-hours. This creates the fundamental bifurcation that TEA-K enforces:
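A rough worked bound shows the scale involved. It assumes, for illustration only, that the threshold is read as a per-trial probability $p$, that trials are independent, and that testing is failure-free; setting $R = 1 - p$ in the zero-failure relationship used earlier gives:

$$
n \;\ge\; \frac{\ln(1-C)}{\ln(1-p)} \;\approx\; \frac{-\ln(1-C)}{p},
\qquad p = 10^{-9},\; C = 0.90 \;\Rightarrow\; n \approx 2.3 \times 10^{9}.
$$

Under those assumptions, about 2.3 billion failure-free trials would be needed to reach 90% confidence.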

| Surety Type | Verification Method | TCM Column | Coverage |
|---|---|---|---|
| Deterministic (stronglinks, weaklinks, ESDs) | Physical test | NS_DET | 100% |
| Probabilistic (10⁻⁹/10⁻⁶ targets) | Analysis (FTA, FMECA) | NS_ANALYTIC | All minimal cut sets bounded |

Nuclear weapon safety is implemented through four design themes:[30] Isolation (exclusion region barriers prevent energy from reaching the nuclear explosive), Incompatibility (stronglinks respond only to a Unique Signal incompatible with natural phenomena), Inoperability (weaklinks fail safe at lower stress than stronglinks — in a fire, the weaklink breaks the circuit before the stronglink can be bypassed), and Independence (dual stronglinks with different UQS patterns, so no single failure compromises both).

Environmental Sensing Devices (ESDs) detect valid launch signatures — acceleration, spin, barometric pressure — before the stronglink accepts UQS. They function as environmental gates: the trajectory UQS is generated only after ESD verification of launch-like conditions.[34]

These features are deterministic — they either work or they don't, and they must be tested physically. The 10⁻⁹ probability is verified analytically by ensuring no minimal cut set in the fault tree can bypass these deterministic barriers. That is why TEA-K separates NS_DET (test 100% of safety features) from NS_ANALYTIC (bound all fault paths analytically).
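The analytic side can be sketched as a bound over minimal cut sets. This is an assumption-laden illustration: it uses the standard rare-event (union) approximation, treats basic events in a cut set as independent, and the event names and probabilities are invented rather than taken from any real fault tree.

```python
import math


def top_event_upper_bound(cut_sets, p):
    """Rare-event bound: sum over minimal cut sets of the product of
    basic-event probabilities (events in a cut set assumed independent)."""
    return sum(math.prod(p[e] for e in cs) for cs in cut_sets)


def ns_analytic_closed(cut_sets, p, target):
    """Closed only if every basic event has a probability bound and the
    top-event bound meets the target."""
    all_bounded = all(e in p for cs in cut_sets for e in cs)
    return all_bounded and top_event_upper_bound(cut_sets, p) <= target


if __name__ == "__main__":
    # Invented events and probabilities, for illustration only.
    p = {"stronglink_a_bypass": 1e-6, "stronglink_b_bypass": 1e-6,
         "esd_false_accept": 1e-3}
    cut_sets = [{"stronglink_a_bypass", "stronglink_b_bypass", "esd_false_accept"}]
    print(ns_analytic_closed(cut_sets, p, target=1e-9))
```

A cut set containing any unbounded basic event keeps the ledger open regardless of how small the other probabilities are.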

Sandia National Laboratories[31] maintains unique facilities for qualification:

| Test | Parameters | Facility | Objective |
|---|---|---|---|
| Lightning | 200 kA, 1–5 μs rise | Sandia Lightning Simulator | Verify Faraday cage shunts strike to ground |
| Fire | 800–1200°C, 75–250 kW/m² | FLAME[32] | Verify weaklink fails before stronglink |
| Crush/Shock | Rocket sled to barrier | Rocket Sled Track[33] | Verify contacts don't force closed |

DAFMAN 91-119[29] governs the Nuclear Safety Cross-Check Analysis (NSCCA). A review of the full public text separates what the instruction actually requires from what is commonly attributed to it:

Verified requirements: NSCCA must be performed by an independent organization. Software must provide protection against "deliberate unauthorized operation of critical functions" — explicitly addressing malicious intent. Each critical routine must have a single entry point with complete exit paths. Evaluated code must correspond unambiguously to the executable.

Commonly attributed but NOT in the public text: "100% path coverage" (no coverage metrics specified), "object code verification" as a separate step (the instruction requires unambiguous code correspondence, not a distinct verification), "model-to-code verification" as a mandate (the instruction permits model-level evaluation under conditions, not mandates it).

These specifics may exist in classified implementation guidance. TEA-K uses the instruction's actual text as the verified baseline.

Nuclear certification follows a formal gate structure per AFI 63-125[25] and DAFMAN 63-119.[26] TEA-K binds adequacy predicates to each gate:

Critical sequencing: Design Cert must be substantially complete before CDOT. CDOT must be issued before dedicated OT can begin. OT results feed Operational Cert. NSCCA completion is required for Design Cert on all safety-critical software.

Completion of Gate 4 results in the item being listed on the Master Nuclear Certification List (MNCL) — the sole authority[25] for nuclear-certified items, maintained by AFNWC.

Three distinct pillars produce different evidence types. Certification requires all three:

| Pillar | Authority | Key Question | Governed By |
|---|---|---|---|
| DT | USD(R&E) | Built correctly? | DoDI 5000.89[8] |
| OT | DOT&E | Right capability? | DoDI 5000.98[9] (Dec 2024) |
| N_CERT | AFNWC | Safe for nuclear? | AFI 63-125 / DAFMAN 91-118 |

TEA-K synthesizes outputs from established frameworks into a unified adequacy contract. No new T&E methodology is invented — the novelty is in the integration.

Test Coverage Matrix: 15 columns × subsystem/interface rows. Every cell has a threshold, evidence list, pillar tag, and M&S validation status.

Probabilistic surety closure: FTA minimal cut sets, probability bounds, NSCCA status per software component.

Model Validation Dependency Graph: physical test anchors for every M&S model. Unvalidated models cannot support certification.

Reliability Evidence Fusion Plan: Bayesian framework with explicit prior transferability classification (HIGH/NONE).

4-gate binding to AFNWC certification structure. Each TCM cell has PARTIAL_BY and CLOSED_BY gate assignments.

Infrastructure/Warhead Dependency Register: explicit blocking conditions for PSSTF, W87-0/W87-1, and ICBM Fuze Modernization.

Hard gates: MNCL compliance, JDRS Category I deficiency resolution, evidence provenance with crypto integrity tags.

MIL-STD-1553/1760 fault types: parity, sync, Manchester, truncation, superseded commands. 100% for safety-critical SPFAs.

MIL-STD-188-125: Appendix A (shielding), B (PCI), C (CWI). HM/HS baseline and lifecycle program.

DT/OT/N_CERT pillar tags for every evidence item. Prevents conflation of developmental and certification evidence.

RMF ATO + Nuclear Surety Overlay + adversarial testing (ACDT). Digital isolation verification for shared-silicon processors.

E-6B ALCS legacy verification. Nov 5, 2024 test demonstrated ALCS commanding MMIII.[37]

MIL-STD-1553/1760 Fault Injection[36] — standard fault types that FAULT_INJECT_SPEC requires for data/control interfaces:

| Fault Type | Mechanism | Expected Response |
|---|---|---|
| Parity Error | Invert parity bit | RT suppresses response; BC records No Response |
| Sync Field Corruption | Distort 3-bit sync | Decoder fails to sync; word discarded |
| Manchester Error | Violate bi-phase encoding | Hardware rejects as noise |
| Superseded Command | Send command before prior processed | RT sets Busy bit |
| Word Truncation | Incomplete transmission | System enters safe state |
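The parity-error row can be illustrated at the bit level. MIL-STD-1553 words carry 16 data bits plus one odd-parity bit. The sketch below models only that check, with hypothetical function names; it does not model sync, Manchester encoding, or terminal behavior.

```python
def odd_parity_bit(data16: int) -> int:
    """Parity bit that makes the count of ones across the 16 data bits
    plus the parity bit odd."""
    ones = bin(data16 & 0xFFFF).count("1")
    return 0 if ones % 2 == 1 else 1


def word_is_valid(data16: int, parity: int) -> bool:
    """Receiver-side check: does the received parity bit match?"""
    return parity == odd_parity_bit(data16)


def inject_parity_error(data16: int):
    """Return (data, parity) with the parity bit deliberately inverted."""
    return data16, odd_parity_bit(data16) ^ 1


if __name__ == "__main__":
    word = 0x1234  # arbitrary example payload
    print(word_is_valid(word, odd_parity_bit(word)))   # True
    print(word_is_valid(*inject_parity_error(word)))   # False
```

On real hardware the injected word would go out through a bus tester, and the test would then assert the expected response from the table: no status word from the RT.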

MIL-STD-188-125[35] — three primary EMP/HEMP test methods: Appendix A (Shielding Effectiveness — electromagnetic barrier integrity, 10 kHz to 1 GHz), Appendix B (Pulsed Current Injection — high-amperage pulses on power/signal/data lines simulating E1, E2, E3 waveforms), Appendix C (Continuous Wave Immersion — RF illumination to find coupling paths and shield defects). Hardness Maintenance/Surveillance programs prevent degradation over the system lifecycle.

Gate passage is a computed state, not a committee opinion. If any condition evaluates FALSE, the gate cannot pass.

CELL_ADEQUATE — the per-cell test:

NS_ANALYTIC_CLOSED — probabilistic surety closure:

CY_SURETY_ADEQUATE — cyber-surety overlay:

MNCL_ADMISSIBLE — configuration compliance:

These four sub-predicates decompose the master predicate into individually testable conditions. Each can be evaluated independently — and each can independently block gate passage.
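The composition of the sub-predicates into a gate decision can be sketched as a plain conjunction. The bodies of the four sub-predicates are not reproduced here, so the sketch takes their results as inputs; the `GateInputs` type and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateInputs:
    """Already-evaluated sub-predicate results for one gate (hypothetical shape)."""
    cells_adequate: tuple          # one CELL_ADEQUATE result per TCM cell
    ns_analytic_closed: bool
    cy_surety_adequate: bool
    mncl_admissible: bool


def gate_blockers(g: GateInputs) -> list:
    """Names of the sub-predicates that currently evaluate FALSE."""
    checks = {
        # An empty matrix is treated as not adequate rather than vacuously true.
        "CELL_ADEQUATE": bool(g.cells_adequate) and all(g.cells_adequate),
        "NS_ANALYTIC_CLOSED": g.ns_analytic_closed,
        "CY_SURETY_ADEQUATE": g.cy_surety_adequate,
        "MNCL_ADMISSIBLE": g.mncl_admissible,
    }
    return [name for name, ok in checks.items() if not ok]


def gate_passes(g: GateInputs) -> bool:
    """The gate passes only if no sub-predicate blocks it."""
    return not gate_blockers(g)


if __name__ == "__main__":
    g = GateInputs((True, True, False), True, True, True)
    print(gate_passes(g), gate_blockers(g))
```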

All TEA-K components use mature technologies (TRL 8–9): structured databases, Bayesian statistics per MIL-HDBK-189C, graph databases for MVDG, and operational systems (MNCL, JDRS). No component requires research-grade development.

| Phase | Timeline | Key Activities |
|---|---|---|
| Phase 0: Specification | Months 1–6 | Draft TEA-K conformance spec. Define TCM_v2 structure. Document NS_ANALYTIC_LEDGER. Establish REFP priors. Set IWDR blocking conditions. Coordinate with AFNWC and Sentinel PMO. |
| Phase 1: Validator | Months 7–12 | Implement AC1–AC9 validators. Build Evidence Ledger with provenance tracking. Construct MVDG with 2025 propulsion/separation test linkages. Integrate with MNCL/JDRS. |
| Phase 2: Pre-Baseline | Months 13–24 | Populate TCM_v2 with Sentinel data. Track MVDG as flight test approaches. Monitor IWDR dependencies. Iterative conformance checks against Milestone B proposal. |
| Phase 3: Operational | Months 25–36 | Continuous validation through DT/OT. Gate reviews using TEA-K predicates. MVDG update as flight data accumulates. REFP posterior updates. |

Critical dependency: MVDG transitions from largely UNVALIDATED to partially VALIDATED only after first flight test. If the 2027 target slips, Phase 2 extends accordingly. TEA-K's binding to IDX_FIRST_FLIGHT_TEST rather than a calendar date ensures this propagation is automatic.
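Binding to an index instead of a calendar date can be illustrated with a small resolver. The milestone name, the 90-day offset, and both dates are placeholders chosen for the example; only the IDX_FIRST_FLIGHT_TEST identifier comes from the text.

```python
from datetime import date, timedelta

# Hypothetical milestone bound to an index plus an offset in days.
MILESTONES = {
    "MVDG_FIRST_CALIBRATION": ("IDX_FIRST_FLIGHT_TEST", 90),
}


def resolve(milestones: dict, index_dates: dict) -> dict:
    """Turn index-relative milestones into calendar dates."""
    return {
        name: index_dates[idx] + timedelta(days=offset)
        for name, (idx, offset) in milestones.items()
    }


if __name__ == "__main__":
    # Placeholder dates standing in for the Air Force and GAO estimates.
    early = resolve(MILESTONES, {"IDX_FIRST_FLIGHT_TEST": date(2027, 6, 1)})
    late = resolve(MILESTONES, {"IDX_FIRST_FLIGHT_TEST": date(2028, 3, 1)})
    print(early["MVDG_FIRST_CALIBRATION"], late["MVDG_FIRST_CALIBRATION"])
```

Changing the single index date moves every dependent milestone by the same amount, so no downstream date has to be edited by hand.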

| Risk | Likelihood | Impact | Mitigation |
|---|---|---|---|
| MVDG calibration insufficient from early flights | Medium | High | Additional flight tests; LSS cross-validation; phased MVDG update |
| NSCCA capacity constraints | High | Medium | Early contractor engagement; prioritize SURETY_CORE scope |
| PSSTF remains unresolved | High | Critical | Compensatory measures; accelerate facility planning; GAO recommendation tracking |
| W87-0 STS envelope exceedance | Medium | High | Dampening hardware; delta testing; early envelope mapping |
| GAO/AF flight test timeline disagreement | Medium | High | Plan for both dates; bind to index, not calendar |
| Multicore interference on shared-silicon processors | Medium | Medium | Formal analysis per SPK-05 flag; isolation verification |

| Gap | TEA-K Response | Validation |
|---|---|---|
| "Adequate testing" undefined | TCM_v2 with 15 coverage dimensions | AC1–AC9 |
| M&S unvalidated | MVDG physical test anchoring | AC3 |
| Surety test/analysis conflated | NS_DET + NS_ANALYTIC bifurcation | AC5 |
| Reliability statistics inadequate | REFP Bayesian framework | AC9 |
| Gate requirements unclear | CERT_GATE_MAP to 4-gate structure | Gate predicates |
| Infrastructure dependencies hidden | IWDR blocking conditions | AC8 |
| Evidence integrity assumed | MNCL + JDRS + provenance | AC6, AC7 |

Path Forward: TEA-K must be established during the current restructuring period. Milestone B re-certification is expected end of 2026.[6] Once a new baseline is approved without TEA-K conformance, the opportunity closes until test inadequacy forces another review.

SPK-05's frozen/agile boundary is TEA-K's biggest input. SURETY_CORE surfaces require deterministic verification + NSCCA. AGILE surfaces accept streamlined checks. Without this boundary, TEA-K cannot set coverage requirements.

SPK-04 defines interface contracts (message formats, timing, epochs). TEA-K verifies they work. If SPK-04's interface contract changes, TEA-K's coverage matrix changes. E-6B ALCS backward compatibility flows through both.

PSSTF is a blocking condition. You cannot test physical security for 450 new silos without a facility. SPK-03's production timeline determines when PSSTF exists. Until it does, TEA-K has an open gate it cannot close.

TEA-K binds to IDX_FIRST_FLIGHT_TEST in SPK-02's coupling matrix. MVDG validation and REFP posterior updates depend on flight test timing. If flight test slips, M&S evidence stays unvalidated.

NSCCA creates workforce demand that SPK-07 sizes. If insufficient NSCCA contractor capacity exists, SPK-06's Class 2 verification queue backs up, delaying Design Certification.

NSCCA contractor costs, test facility construction, and additional delta testing for W87-0 exceedances all feed UC-BCK. Cost of testing is real but flows indirectly through schedule.

TEA-K must satisfy nine criteria to provide computable test adequacy. All remain unmet as of March 2026.